Eloquent Images by Gary Hart

Insight, information, and inspiration for the inquisitive nature photographer

An Old Friend Returns

Posted on May 22, 2026

Moonlight Prism, Lower Yosemite Fall Moonbow, Yosemite

Sony α1

Sony 14mm f/1.8 GM

ISO 1600

f/1.8

4 seconds

Has anyone noticed that Yosemite becomes a completely different park with each season? I feel quite fortunate to live close enough that I’m able to enjoy each Yosemite season, and to offer Yosemite workshops in three of four seasons. (Actually, you can probably infer that I live close enough to offer workshops in all four seasons, but I leave summer to the tourists.)

Each Yosemite season offers its own distinguishing qualities. In autumn, though the falls are nearly dry (Bridalveil still trickles), red and gold leaves fill the trees, carpet the ground, and reflect gloriously in the low and slow Merced River. Winter is when the waterfalls return to life, and also the best time to find Yosemite draped in powdery white—but since snow is never guaranteed, I always schedule a workshop around the natural firefall light on Horsetail Fall (while still crossing my fingers for snow). Spring is the Yosemite of postcards and calendars, when waterfalls peak, dogwood blooms abound, low-lying meadows host (reflective) vernal pools, and rainbows color the waterfalls.

Each of these Yosemite workshops has a potential bonus lunar event that I try to include when it corresponds with the season’s primary distinguishing quality. Many autumns and winters I can align a rising full moon with Half Dome at sunset, and spring is when the light of a full moon paints a rainbow at the base of Lower Yosemite Fall.

For years I scheduled two workshops around this moonbow, but finally decided that the window for the absolute best moonbow experience is open only during a four-week span from early April into early May. Though a moonbow also happens with the full moon from late March and into June, in late March and early April the moonbow appears in the mist billowing too far left of the fall; later in May and into June, the crowds swarming Yosemite Valley make an unpleasant experience for everyone.

Despite the remarkably predictable moon/landscape geometry that creates a moonbow, its appearance is never certain. Clouds are the biggest nemesis, but low water can also diminish the experience. Coming into this year, limiting my moonbow workshops to a single full moon in that four-week window, combined with factors beyond my control (in addition to clouds, you can add a sudden park closure and global pandemic), has meant that my previous successful moonbow sighting was in 2019. And for a while, it appeared 2026 would continue that streak of futility.

There was a time when I didn’t believe it possible for Yosemite’s spring runoff to be so low that the moonbow would essentially be erased. But as California’s wet season progressed and the Sierra Nevada range found itself on the way to an historically poor snowpack year, I couldn’t help flashing back to 2015. That’s the year PBS Newshour arranged for a film crew to follow me and my Yosemite spring workshop group as we photographed Yosemite’s spring splendor, with a particular emphasis on the moonbow. But 2015 also happened to be the year an unprecedented drought shrank Yosemite’s normally booming spring waterfalls to mere trickles. Rather than cancel the Newshour segment and keep the film crew home, they adroitly pivoted to a piece on California’s drought and its impact on Yosemite. Check it out. (FYI, I haven’t aged a bit since then.)

Would history repeat this year? As April approached, the answer appeared to be yes. Then a series of unseasonably cold storms arrived, filling our rivers and bolstering the Sierra snowpack just in the nick of time. A couple of days before the workshop (scheduled for the last four days of April), another storm landed, further recharging the falls and even lingering through the workshop’s first couple of days: just enough rain and clouds to provide excellent photography, but not enough to wash us out. Suddenly, my concern wasn’t whether there would be enough water, it was whether the clouds would depart in time for my planned Wednesday night moonbow shoot.

Even with all this last-minute moisture, spring 2026 was not especially wet in Yosemite. Though still flowing beautifully, all the falls were noticeably on the low side of average for this time of year (peak runoff is usually around May 1). Nevertheless, there was more than enough water exploding on the rocks beneath the falls to form the billowing mist Yosemite’s signature waterfall rainbows require.

Throughout the workshop my group enjoyed an assortment of daylight rainbows, from various vantage points (I have the timing down to the minute for each location, creating the illusion that I’m much smarter than I am), but it was the moonbow everyone was crossing their fingers for. I had one person in the group who had already taken this workshop twice, each time with the expressed desire to photograph the moonbow. With Kent returning for a third attempt, the pressure was on and I was pretty committed to making it happen for him if at all possible.

Since the moonlight timing and angle would be best on Wednesday, the workshop’s penultimate night, I tried to get everyone up to speed on moonlight and moonbow photography during that afternoon’s training session. The evening’s sunset shoot featured the full moon rising over Bridalveil Fall, photographed from an elevated turnout on Big Oak Flat Road. As soon as we finished there, I zipped the group back down to the Yosemite Valley Lodge parking lot, where we grabbed our gear and made the short walk, in the gathering dark, up to the bridge at the base of Lower Yosemite Fall.

The below-average flow in the fall meant that this year’s moonbow wouldn’t be as big, or last as long, as it does in the wettest years. That’s because less water means a smaller cloud of mist for the bow to form in, not only shrinking the moonbow’s breadth, but also terminating the show sooner, as the rising moon shifts the necessary 42-degree rainbow angle downward and eventually out of the mist. (Rainbows drop as the sun or moon rises; read more about the geometry of rainbows on my blog.) But this year’s moonbow was plenty big enough to thrill everyone, providing about 40 minutes of quality photography between the time the sky was dark enough for the moonbow to appear, and when it dropped out of the mist.
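For anyone who likes to see the geometry as numbers, here is a minimal sketch of why the bow drops as the moon climbs. It assumes the usual textbook figure of roughly 42 degrees for the radius of a primary rainbow around the point directly opposite the light source; the function name and the sample moon altitudes are made up for illustration, not measurements from this night.

```python
PRIMARY_BOW_RADIUS_DEG = 42.0  # approximate angular radius of a primary rainbow


def bow_top_elevation(light_altitude_deg: float) -> float:
    """Elevation of the top of the bow above the horizon, in degrees.

    The bow is centered on the point directly opposite the sun or moon,
    which sits as far below the horizon as the light source is above it.
    A negative result means the entire bow is below the horizon.
    """
    return PRIMARY_BOW_RADIUS_DEG - light_altitude_deg


# Hypothetical moon altitudes, for illustration only.
for altitude in (10, 20, 30, 40):
    top = bow_top_elevation(altitude)
    print(f"moon at {altitude:2d} deg -> top of bow at {top:5.1f} deg")
```

Every degree the moon rises lowers the bow by a degree, which is why a smaller cloud of mist means a shorter show.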

Even with less water than usual, the moonbow was obvious to the unaided eye as a shimmering silver band. And the rainbow colors were clearly visible in our mirrorless viewfinders or live-view LCD screens, even before a picture was captured.

The diminished flow in Yosemite Fall had one major advantage: at no point did we feel like we were photographing in a rainstorm. Every once in a while we’d get sprinkled with a small amount of mist, but I’ve photographed the moonbow from here when everyone had to don rain gear, and even a single 5-second exposure—that started with a dry lens—would finish with the front lens element completely misted over. When it gets like this, the only way to do it is with an umbrella in one hand and a towel in the other.

There were quite a few people on the bridge this evening, but I’ve seen far more here. We’d become a little scattered on the walk up to the fall, so it took me a little while in the darkness to ensure everyone in my group had found a suitable spot to set up. Once I was confident my group was positioned satisfactorily, I tried to get around to everyone to make sure they were doing okay.

Exposure for the moonbow is pretty easy, and I’d given them settings to use before we started. Composition is a little tougher given the limited light, but I’d very strongly encouraged everyone to put their lens at its widest focal length and leave it there—this simplifies things, and today’s digital cameras have more than enough resolution to allow ample cropping later.

Not only does shooting wide streamline composition in the dark, it simplifies the most challenging aspect of night photography: focus. Since changing focal length requires refocusing, and finding focus in the dark is not easy, once you’ve achieved sharpness you don’t want to do it again. Most of my time this evening was spent moving around between the members of my group, helping them get focused, or checking their focus to make sure it was good. I started with Kent, but eventually made it around to nearly everyone (and even helped one or two people who weren’t in my group).

Eventually I found a few minutes for some frames of my own, squeezing in between a member of my group and another person who was okay with me and my tripod up in his space (I checked). I take both horizontal and vertical versions of virtually every scene I photograph, but I always photograph the moonbow vertically because I just haven’t found a horizontal composition that pleases me. For starters, I want to include as much sky as possible, and I think Yosemite Creek churning through granite boulders is far more interesting than the trees on the left and right. This evening, I used my 14mm prime lens, enabling me to include a lot of starry sky above the fall (including 5/7 of the Big Dipper), while still getting plenty of moonlit creek and granite beneath it.

You can tell that I captured this toward the end of the moonbow window by how low the moonbow is. When we arrived, it hovered above the visible mist, just below the top of the lower section (where the fall starts to spread). So even though my moonbow is not quite as broad as the earlier ones, it is brighter, thanks to all that water.

I should probably add a few words about my exposure. I started doing moonlight photography about 20 years ago, and established my full moonlight exposure values very early on. Back then, ISO 400 was about as high as I could go without noticeable noise; since my fastest lens at the beginning of my digital years was f/4, my exposure settings were usually in the ballpark of ISO 400, f/4, 30 seconds.

The problem with 30 seconds is you get a little star movement—not a deal-breaker, but enough to be visible if you look closely. So as sensor technology improved, and I acquired faster lenses, my ISOs went up and my shutter speeds dropped, while my exposure values (amount of light captured) remained constant. For this one, I used f/1.8, ISO 1600, and 5 seconds.
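The trade between ISO, aperture, and shutter speed is easy to check with a little arithmetic. The sketch below is only an illustration, using the standard photographic relationships (each doubling of ISO or shutter time is one stop, and light through the lens scales with one over the f-number squared); the function name is my own invention. Real moonlight varies from night to night, so a nonzero result for any given pair of settings is not necessarily an error.

```python
import math


def stops_gained(iso_old, f_old, secs_old, iso_new, f_new, secs_new):
    """Net change in exposure, in stops, going from the old settings to the new.

    Positive means the new settings record a brighter image;
    zero means the two sets of settings are equivalent.
    """
    iso_stops = math.log2(iso_new / iso_old)        # doubling ISO = +1 stop
    aperture_stops = 2 * math.log2(f_old / f_new)   # light scales with 1 / N^2
    shutter_stops = math.log2(secs_new / secs_old)  # doubling time = +1 stop
    return iso_stops + aperture_stops + shutter_stops


# Two stops more ISO traded for two stops less shutter time: equivalent.
print(stops_gained(400, 4.0, 30, 1600, 4.0, 7.5))

# The two sets of settings mentioned in this post.
print(round(stops_gained(400, 4.0, 30, 1600, 1.8, 5), 2))
```

Because each term is just a count of doublings, you can do the same bookkeeping in your head in the field: add stops in one place, subtract them somewhere else.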

With limited time, and even more limited ability to move around, I managed only a handful of frames this night. But that was fine because my photography is never the priority in a workshop (and I certainly don’t lack for moonbow images from past years). Even though this year’s version may not be my best moonbow shot ever, I’m still pretty pleased with my results.

In the image review the next day, I invited everyone to share a moonbow image in addition to their review image—it was wonderful to see that everyone had a success! That includes Kent, who had to leave the workshop early, but who reported to me that his moonbow image is beautiful and he’ll no longer need to repeat the course.

Workshop Schedule || Purchase Prints || Instagram

Yosemite Falls, Day and Night

Category: Moonbow, Moonlight, rainbow, Sony 14mm f/1.8 GM, Sony Alpha 1, waterfall, Yosemite, Yosemite Falls Tagged: Lower Yosemite Fall, moonbow, moonlight, nature photography, Rainbow, Yosemite, Yosemite Falls

More Than a Pretty Picture

Posted on April 26, 2026

Morning Light, Half Dome and Merced River, Yosemite

Sony a7R V

Sony 24-105 G

1/25 seconds

F/11

ISO 100

Before exploring for the scene that ultimately delivered the image in my prior blog post, I got my February group set up at what I’ve always felt was the primary view at this location. With Half Dome framed on the left by towering evergreens, on the right by a long diagonal ridge, and the tree-lined Merced River in the foreground, this spot has all the landscape ingredients a beautiful image needs. Stir in fresh snow, translucent clouds, and warm sunlight, and the beauty is ratcheted off the charts.

I interrupt this photo blog to share a little about what’s been disrupting my life this week: a “minor” home remodel. In the grand scheme of things you can do to improve a house, upgrading kitchen cabinets (completely new exteriors, all new drawers, pull-out shelves) is no big deal. But anything that requires my wife and me to completely pack up the kitchen and basically camp out in our living room at least feels quite major.

Before the installers even started, our preparation included emptying the original cabinets into boxes, relocating our refrigerator to the dining room, removing the above-range microwave, and expanding the dining room table enough to host our kitchen essentials—convection oven, microwave, espresso machine, and Vitamix—while somehow leaving just enough remaining space for meal prep and dining for two.

Suddenly, our entire downstairs was an obstacle course of boxes and countertop items (who knew a relatively small kitchen could hold so much?). My wife and I both work from home, but while I could retreat to my upstairs office, her workspace was downstairs amidst the mayhem. To get any work done amidst the din of power saws and sanders, each of us had to resort to noise-cancelling headphones at multiple points.

I’m happy to report that the just-completed cabinets exceed our lofty expectations, and the cars are back in the garage where they belong. On the other hand, at least half of our stuff is still in boxes as we meticulously unpack and reorganize our “new” kitchen.

Since every hardship is a learning opportunity, here are the things this experience taught us to never take for granted again: a kitchen sink, a dishwasher, parking inside, on-demand filtered water straight from the fridge, and not having to rummage through boxes to find that thing we never imagined we should leave out (cheese grater, coffee filters, 1/4 measuring cup, and on, and on…).

Next up? Hmmm, this 20-year-old interior paint is starting to look a little dated…

So, anyway…

Finding the confluence of all these landscape and atmospheric elements is the stuff landscape photographers dream of. But I think far too many, when gifted this opportunity, simply settle for capturing the beautiful scene. (Not that there’s anything wrong with that.) In so doing, they miss an opportunity to elevate their images to something extraordinary.

I see examples of this kind of settling everywhere. Whether it’s social media, hotel room “art,” screensavers, calendars, postcards, or any other medium that displays beautiful landscape photography, I can’t help shaking my head at clearly beautiful scenes that could have made much better images had the photographer taken a few simple steps.

It seems almost as if they said, “Wow, this is so beautiful, all I have to do is click my shutter before it goes away.” And if your only goal is to save the moment, read no further. But to my mind, the more beautiful a scene, the more important it is to squeeze every ounce of beauty from it. I could probably go on for hours on this topic, but I’ll try to distill my thoughts down to a few basic points.

Foremost is the need to be aware of the way the viewer’s eye moves through the frame. When I decide a scene is worth photographing, I start by identifying what I want the image to be about—a spectacular view, a specific subject, a collection of subjects, beautiful light, and so on (or some combination of these)—then identify the best way to guide my viewers’ eyes there.

With the “about” decided, I survey the scene to identify elements that possess “visual weight”—objects or features that pull the eye like gravity pulls celestial objects. Qualities that give an object visual weight include size, brightness, contrast, color, position in the scene, or any other characteristic that makes something stand out from its surroundings.

The value (in an image) of an object possessing visual weight isn’t necessarily a function of the object’s aesthetic appeal. A very ordinary feature in the right position qualifies as a desirable visual weight (VW) feature when it serves a scene’s most striking element, either by creating a balance point, by completing a virtual line that connects to the primary subject or other VW object, or through some combination of these. On the other hand, a beautiful but poorly positioned feature could actually work against the scene’s primary subject.

Undesirable objects with visual weight draw the eye away from the focal point of the image. I try to compose these out of the scene, or deemphasize them in the composition—for example, putting them in a less prominent location, burying them in the foreground of a silhouette, or deemphasizing them with soft focus. When none of those options are available, burning (darkening) the offending object in processing often works wonders.

Viewers subconsciously draw virtual lines connecting objects with visual weight. Desirable objects with visual weight can be “connected” virtually by creating appealing positional relationships. I’m especially drawn to diagonal connections between these objects, and look to create them whenever possible.

Another frequently overlooked aspect of “pretty scene” pictures that fall short of their potential is distracting elements that pull the eye from whatever the scene is supposed to be about. In addition to, and often even worse than, misplaced visual weight objects in the main part of the scene are messy borders. Since the visual weight of objects seems to increase on the border of the frame (this is just a personal observation that I feel pretty strongly about), I always strive for clean borders by avoiding cutting things off (most of it in the frame, but just a little piece missing), or having them jut in (most of an object outside the frame, with just a small piece visible).

But since we’re photographing the natural world, scenes usually don’t cooperate, often making it impossible to avoid objects cut off or jutting in at the edges of the frame. In that case, it’s most important to make cutting your border objects a conscious choice, rather than not checking at all and placing the border wherever it happens to fall while you concentrate on the main part of the scene. This border awareness includes clouds at the top of the frame, which I find to be an especially overlooked flaw that’s usually pretty easy to fix, if you make the effort to look.

In the Half Dome image above, in a very general sense this was the first composition I saw when I arrived here. But not wanting to settle for the (undeniably) pretty scene, I went to work finding my about and visual weight objects and overall framing. Half Dome was the obvious “about” choice, but I also wanted to feature the snow and morning light in the clouds.

The first thing I noticed when I framed up something that featured these elements while composing wide enough to include the river too, was the log jutting in on the lower left. Eliminating it completely also eliminated the best part of the river, so I went with Plan B: composing wide enough to make the log one of my VW objects, taking it off the border and far enough into the scene to create a nice diagonal connection with Half Dome.

Including all of the rock (from which the log emerges) meant going much wider than I wanted to, and introduced other undesirable elements, like other workshop students (I know what you’re thinking: no, the students were not undesirable, I just didn’t want them in my frame). But I got enough of the rock so it didn’t appear to be an afterthought, making sure not to cut off that small, horizontal patch of snow beneath the (unavoidable) snowy cap.

The right side of my frame was determined by a protruding branch that I didn’t want to include. With the left and right setting my focal length, I just had to aim my camera up and down until I found the right combination of foreground snow below, and translucent clouds above.

Assembly Required

Category: Half Dome, How-to, Merced River Canyon, snow, Sony 24-105 f/4 G, Sony a7R V, winter, Yosemite Tagged: Half Dome, Leidig Meadow, Merced River, nature photography, snow, winter, Yosemite

Who’s Counting?

Posted on April 18, 2026

Winter Morning, Half Dome and Merced River, Yosemite

Sony a7R V

Sony 24-105 G

1/25 seconds

F/11

ISO 100

I get a lot of questions during a photo workshop, but about 80% of them are some version of, “Should I do it this way or that way?”:

- “Should I shoot this with a wide or telephoto lens?”

- “Should I shoot this horizontal or vertical?”

- “Should I include that rock or leave it out?”

- “Should I polarize this or not?”

- “Should I freeze or blur the waterfall?”

- “Should I…?”

Some photographers are so paralyzed by these choices, they choose to do nothing rather than make a “mistake.” They forget that, as with every other artistic endeavor, in photography there’s no universal right or wrong, no consensus on the best way to render a scene.

Other photographers are inhibited by the subconscious need to conserve resources at all costs. That need to conserve probably started way back in our childhood, when we were constantly warned not to waste: clean your plate, turn off the light when you leave the room, don’t leave the water running, and a host of other waste-related proclamations are a rite of passage for American (and likely everywhere else) youth.

Adding to our formative-years’ “don’t waste” anxiety, when film shooters graduated to our first “grown-up” camera (one that didn’t involve a film cartridge and pop-on flash cube—I’m looking at you, Kodak 104), after being rendered destitute by our complex new equipment, we were suddenly punched in the wallet again (and again, and again…) by the perpetual expense of film and processing. It’s no wonder we grew accustomed to sparing every frame, an inclination that for most became ingrained.

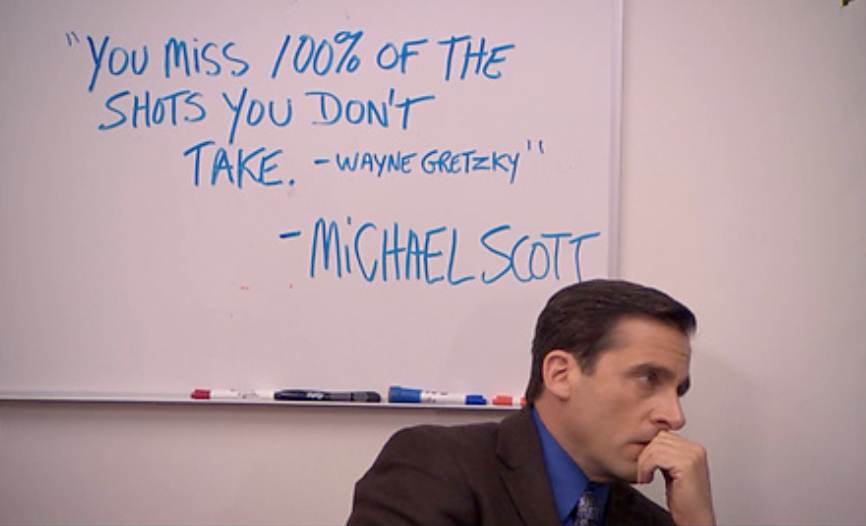

Conserving resources is certainly important, but that parsimony shouldn’t come at the expense of your photographic success. In the immortal words of Michael Scott:

Still not convinced? Here’s a paradigm-bending insight that might help: While every click with a film camera costs money (film and processing), every click with a digital camera increases the return on your investment. That’s right: each time you take a picture with your digital camera, your cost per click drops. So click freely and stop counting: there’s no limit to the number of pictures it takes to get to the one you’re hoping for.
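If you want to see that cost-per-click arithmetic spelled out, here is a quick sketch. The camera price is a made-up round number and the function name is hypothetical; it also assumes the camera is the only expense, ignoring things like memory cards and storage.

```python
def cost_per_click(camera_price: float, clicks: int) -> float:
    """Average cost of each frame when the only expense is the camera itself."""
    if clicks <= 0:
        raise ValueError("clicks must be positive")
    return camera_price / clicks


HYPOTHETICAL_CAMERA_PRICE = 3000.00  # assumed purchase price, for illustration

for clicks in (1_000, 10_000, 100_000):
    per_click = cost_per_click(HYPOTHETICAL_CAMERA_PRICE, clicks)
    print(f"{clicks:>7,} clicks -> ${per_click:.2f} per click")
```

With film, the per-frame cost stays roughly flat no matter how much you shoot; here it keeps shrinking with every frame.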

I’m not suggesting that you put your camera in continuous shooting mode and fire away*. But I am encouraging you to shoot liberally, the more the better—albeit with a purpose. And there’s no law saying that purpose must be a successful image.

A shutter click can just be a way to get in the mood, or to determine whether there really is a shot there (I don’t always know whether a scene is worth shooting until I’ve clicked a couple of frames), or simply an experiment.

Following that mindset, I frequently play “what-if?” games with my camera: “What if I do this?” I’d be mortified if people saw some of these what-if? images, but I do usually learn something from even the worst of them. Often that learning is simply what not to do, because a failure is at least a way to understand why something didn’t work, and often leads to ideas for how it might work the next time.

Even when a scene is so beautiful that a successful picture feels inevitable, I always consider my first click a draft: rather than a completed masterpiece, my goal for the first few clicks of a scene is to establish a foundation that I can incrementally improve until I’m satisfied the finished product is as “perfect” as it can be.

When I’m not sure of the best way to handle a scene, I shoot it multiple ways, deferring the decision until I view the images on a large monitor. I vary not only the composition, but depth, exposure, and motion effects as well.

And never settle for just one excellent image. When photographing a scene that truly thrills you, slow down and shoot it with as much variety as possible: horizontal/vertical, wide/tight, as well as multiple foregrounds, backgrounds, and framing—as many variations as you can come up with. I mean, you never know when a magazine might want to put a vertical version of that horizontal Half Dome in the snow image on their cover—even if it’s not obvious at first, most great horizontal scenes have great vertical scenes as well (and vice-versa).

Which brings me to today’s image of, not coincidentally, Half Dome in the snow.

This was the first morning of the workshop formerly known as “Yosemite Horsetail Fall.” Click the images below to read more (I’ll still be here when you get back):

Circling Yosemite Valley, we feasted our eyes on the new snow covering every exposed surface. My job was to find the best views to put with all that still pristine snow. Beauty surrounded us, but with clouds filling the bowl of Yosemite Valley, views beyond 100 yards had disappeared.

Approaching Sentinel Bridge, I glimpsed Half Dome peeking through the clouds; my instant inclination was to pull into the Sentinel Bridge parking lot, but we found the lot covered with a foot of overnight snow still waiting for the day’s first snowplow. I was pretty sure my Outback could handle it, but I was less confident about the other two cars in our caravan. So I crossed my fingers that Half Dome would hang in a little longer and continued toward another favorite, and less known, view of Half Dome.

We found the parking at this next spot, about a mile beyond Yosemite Lodge, a little less problematic. The downside here was that getting to the view requires a (roughly) quarter mile “hike” on a flat and normally well-worn riverside trail. But of course that trail was now obscured by at least a foot of fresh powder. Since I was the only one who knew where we were going, it fell to me to blaze a new trail. Concerned about missing the window to photograph Half Dome before it disappeared again, I quickly grabbed my camera bag and headed through the forest as fast as the snow allowed, my group in tow.

At first the going was pretty manageable, but whenever we exited the evergreen canopy into a more open stretch, the powder doubled and I sank in above my knees with each step. Normally when leading a group to a new spot, I need to take care not to walk too fast, lest those not familiar with the route lose track of me. But battling through the snow slowed me enough to allow everyone drafting behind me to keep up, and even if someone did fall a little behind, they’d have no problem following the path cut by the rest of us.

Needless to say, bundled for winter and hurrying as quickly as I could, I worked up a real sweat in that quarter mile. The rest of the group wasn’t far behind, and we shared the thrill of the workshop’s first peek at Half Dome, never a certainty in stormy weather. We photographed here for nearly an hour, watching Half Dome disappear and emerge from the clouds many times, creating new opportunities every minute, and also a constant reminder Half Dome could disappear for good any second.

To my eye, the obvious composition was horizontal, with a foreground that included the river (with a partial reflection) and lots of snow-draped trees and rocks. But after working on many versions of that scene, including some vertical versions, I went exploring to see what else I could find.

Less than 20 feet from my original spot, I found this view of Half Dome framed by snowy trees and the graceful curves of drifting snow. I tried many versions of this scene as well, both horizontal and vertical, before landing on this one that was a little tighter than most of the other frames I’d come up with.

In the dozens of photos I came away with are probably more clunkers than classics, but I don’t care. And honestly, this was one of those extra frames that I forced myself to shoot because the scene was too nice to quit, not because I saw something special—it wasn’t until I reviewed my images on my big monitor at home that I realized it was an image worth processing and sharing. (And I know there are probably more keepers in this morning’s folder, just waiting for me to uncover.)

Photography often requires instantaneous choices, and Nature doesn’t usually wait until you’re ready. Just because you’re not sure what you’ll end up with, or don’t have a pro photographer whispering guidance and reassurance in your ear, doesn’t mean you should stop shooting. Even if you don’t see any winners at the time, at the very least you’ll learn something—and who knows, you might just surprise yourself later.

* True story: I once had a woman in a workshop put her Nikon D4 in continuous shooting mode, hold the camera in front of her, depress the shutter button, and spin. When I asked her what in the world she was doing, she replied, “It’s Yosemite—there’s bound to be something good in there.”

Half Dome Views

Category: Half Dome, Leidig Meadow, snow, Sony 24-105 f/4 G, Sony a7R V, winter, Yosemite Tagged: Half Dome, nature photography, snow, winter, Yosemite

Yosemite at its Best

Posted on April 5, 2026

White Gold, El Capitan and the Three Brothers, Yosemite

Sony α1

Sony 16-35 GM II

1/100 second

F/10

ISO 100

If anyone had told me that my annual Yosemite Horsetail Fall photo workshop would get no opportunity to photograph the molten sunset light on El Capitan; that many of my go-to locations, including Tunnel View, would be inaccessible for the entire workshop; that Half Dome would be shrouded in clouds for all but a few hours; that the park would actually shut down the afternoon before our final day, I’d have started preparing to placate a lot of disappointed photographers. Instead, though all of that did in fact come true, this group got to see Yosemite at its absolute best.

Rather than the clear skies and sunset fire every Horsetail chaser prays for, the day before the workshop a series of cold winter storms descended on Yosemite, obscuring the sun and delivering more snow than I’ve ever had to deal with in 20 years of Yosemite photo workshops. In fact, I can’t think of any workshop at any location, including Iceland and New Zealand in winter, that had this much snow.

With all this white stuff came all the inconveniences you might imagine (and some you might not): challenging driving, difficult (to impossible) access to many photo sites, chilly photography conditions, wet clothes and gear, and vanishing Yosemite icons. Not only were some of my favorite views inaccessible, the views that were accessible weren’t much use when the featured monolith or waterfall wasn’t visible.

Some of my workshop locations are so spread out, I don’t have a lot of location timing flexibility. But Yosemite Valley’s compactness enables me to change plans on the fly. I start each workshop with a mental list of must-see locations, plus a list of secondary and tertiary locations to augment the prime spots as schedule permits; exactly when we get to these locations depends on the conditions. But all this workshop’s snow really forced me to dig deep into my (lifetime’s worth) bag of location tricks.

One of my favorite locations to take my groups is a riverside view of El Capitan that has been unofficially, and affectionately, dubbed “Tahiti Beach.” Though no secret to photographers, being a little bit off the road with no obvious trail to the river makes Tahiti Beach relatively free of tourists. But if you’ve been in one of my Yosemite workshops, you’ve been here. Not just a great El Capitan view, it’s hands-down the best Yosemite Valley view of the Three Brothers. And if that’s not enough, Tahiti Beach’s proximity to an especially flat stretch of the Merced River means great reflections. (Continued below)

“Tahiti Beach”

Throughout Yosemite, the best Merced River reflections are possible when the spring snowmelt has subsided and the rushing Merced has slowed to a more leisurely pace—that’s usually from mid-summer through early the following spring. That’s the case at Tahiti Beach too, but if you’re especially lucky, you’ll find yourself here at peak spring runoff following a wet winter, usually sometime in May, when the river rises enough to leave its banks and flood the meadow and form a shallow, perfectly still reflective pool.

Tahiti Beach can be very nice in late afternoon light, but I’m especially fond of the morning’s first sun on El Capitan, and the opportunity to add a reflection makes this one of my favorite spots for that. In a normal Yosemite workshop, conditions are predictable enough that I can get my group to each of my prime locations in the best conditions, and Tahiti Beach is often on the menu for our second morning.

This year, a look at the forecast was enough to know that the conventional location rules would be completely different for this workshop, and I emphasized in the orientation that we’d need to be quick on our feet to adjust to rapidly changing conditions. That reality became immediately clear from the instant we set out for our first shoot, and was further reinforced the following morning, when my plans were immediately thwarted by closed roads and low clouds at several of my first-choice locations.

Refusing to be defeated, we slowly circled the valley, waiting for the inevitable clearing. I eventually took everyone on a short but sweet hike to an off-the-beaten-path spot where we enjoyed a brief but beautiful view of Half Dome before the clouds lowered again. Leaving there in very limited visibility, my plan was to circle back to the Lower Yosemite Fall trail, hoping that we might be able to get close enough to the fall to photograph it through the low clouds. I was afraid that this driving and waiting for openings was frustrating my group, but took heart in their unbridled awe for the beauty surrounding us.

Most of Yosemite Valley is navigated via a pair of one-way roads: eastbound Southside Drive for those entering the park; westbound Northside Drive for those exiting; and a mid-point crossover to shortcut the loop. As we navigated the crossover and headed back east on Southside Drive, I saw hints that El Capitan might soon emerge and made a quick decision to pull over at the parking area for Tahiti Beach. Tahiti Beach wasn’t part of my plan for this morning, but I knew there were no more good views of El Capitan beyond here.

I parked and exited my car, and told everyone to stay put while I surveyed the scene. Though access to Tahiti Beach isn’t treacherous, even in good conditions it can be a little problematic for people with mobility problems—fortunately, multiple routes down to the river that range from short-but-steep to long-but-gradual allow me to offer my group a choice. But this morning I also had to factor in all this fresh snow that meant whichever route we chose, we’d be blazing a new trail.

About the time I decided I probably could get everyone down to the river, El Capitan and the Three Brothers popped out of the clouds. Though this roadside parking area provides nice views of El Capitan and the Three Brothers, its foreground—a scrubby meadow filled with similarly scrubby shrubs and small trees—can’t compete with the reflections possible at the river. But the snow had erased all of the negatives, replacing them with an undulating carpet of pristine white. Since there was no telling how long the increasingly spectacular El Capitan and Three Brothers view would last, I made a snap decision to not attempt to get to the river and just shoot from here.

Within minutes a shaft of warm sunlight split the swirling clouds to spotlight El Capitan, and I knew I’d made the right call. That was further validated when the direct light disappeared for good within a few minutes. Fortunately, the clouds stayed open long enough for everyone to get a wonderful assortment of beautiful and truly unique images of two Yosemite icons.

This workshop was filled with stories like this: frustrating disappearances, surprise appearances, sudden adjustments to plans, and ubiquitous beauty. Through it all, my group responded with euphoric enthusiasm, ignoring minor discomfort and inconvenience. Despite ending a day early, we all came away with memory cards filled with one-of-a-kind Yosemite images—no small feat in one of the most photographed places on Earth.

Workshop Schedule || Purchase Prints || Instagram

The Many Faces of El Capitan


Category: El Capitan, snow, Sony Alpha 1, Sony/Zeiss 16-35 f4, Three Brothers, winter Tagged: El Capitan, nature photography, snow, Three Brothers, winter, Yosemite

Still Learning

Posted on March 31, 2026

Aurora and Big Dipper, Dimmuborgir Lava Fields, Iceland

Sony α1

Sony 14mm f/1.8 GM

ISO 4000

f/1.8

4 seconds

Whether it’s rafting Grand Canyon, gaping at a comet, or chasing supercells and tornados across the Midwest, instead of scratching an itch and moving on (as I’d expected would happen), checking off a bucket-list item only seems to fuel my desire for more.

Case in point

I saw my first aurora in 2019. As with all my prior bucket-list experiences, the aurora experience actually exceeded my lofty expectations. Puzzling over why sights I’ve dreamed of for so long so consistently exceed my expectations helped me appreciate the power of experience over simple observation. For me, the experience component—that feeling like I’m part of something—is what motivates me to learn as much as I can about my subjects. Speaking only for myself (your results may vary), simply photographing beauty without taking time to understand just feels superficial.

Though I’d done a little research on auroras before my first Iceland visit, that obsession to truly understand what was going on didn’t fully kick in until I actually stood beneath those multi-colored shafts and sheets and watched them twist and fold above my head. Game on.

I learned about solar cycles, solar storms, the solar wind, Earth’s polarity, the magnetosphere, the magnetotail, ionization of atmospheric molecules, and how all these elements conspire to put on this dazzling show. And since, for photographers, a significant aspect of aurora science centers on the ability to predict when and where it will appear, I paid special attention to the Kp index: the measure of aurora-causing electromagnetic activity in Earth’s magnetosphere that is the prime focus of most aurora prediction resources.

So, armed with just enough knowledge to be overconfident, and a Kp-based app that validated it, I enjoyed reasonable aurora success in subsequent Iceland visits. But despite this success, and access to Kp forecasts that stretched out 30 days, it didn’t take long to realize that predicting tonight’s aurora activity by Kp-tracking alone is not very reliable—less reliable even, than a weather forecast that says it’s going to rain in 7 days. While there was a clear correlation between high Kp values and an active aurora, I couldn’t figure out why so many high Kp nights disappointed, and low Kp nights dazzled.

What was I missing?

Digging deeper, I saw that my aurora app measured a lot of electromagnetic behavior besides Kp. I’d never really paid a lot of attention to these other cryptic values, but having become pretty comfortable with aurora-science basics, I thought my brain cells might be primed to dig a little deeper. The first thing I learned was that many of these measurements, while significant to solar scientists, aren’t terribly useful to aurora watchers. But I did identify one that is: Bz.

In the simplest terms possible, Bz measures the north/south orientation of the interplanetary magnetic field (IMF) that originates at the sun and propagates outward, eventually interacting with Earth’s magnetic field. Turns out, for predicting auroras, the Bz orientation might just be more important than the Kp index.

In fact, the Bz value can completely make or break an aurora show. Without getting too deep into the scientific weeds (by diving into knowledge that’s far beyond my pay grade), a south-oriented IMF, represented by a negative Bz value, stimulates Earth’s magnetosphere in a way that greatly increases the chances for an active aurora; when the Bz is positive (north-oriented IMF), the IMF subdues magnetosphere activity and stifles the aurora.

The problem—and likely the reason aurora forecast apps focus mostly on Kp—is that while Kp can be (kind of) predicted days or (more dubiously) weeks ahead, Bz can only be measured, not predicted. The best we can do is park satellites at the gravitationally stable Lagrange Point 1 (L1)—where Earth/Sun gravity balance each other—to monitor the solar wind as far out in space as possible (about 932,000 miles from Earth). Depending on the speed of the solar wind, the IMF can take from 15 to 60 minutes from the time we measure it until it affects the magnetosphere and delivers an aurora show (or not).

Though Bz can’t really be predicted, the 15 – 60 minute lag time between measurement and arrival does provide one extra benefit: the ability to see what’s coming in the next hour or so to decide whether or not this would be a good time to pack up and go home, or maybe stick around a little longer.
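For the arithmetically inclined, that 15 to 60 minute window is just distance divided by speed. Here is a minimal Python sketch of the calculation. The 932,000 mile distance comes from the paragraph above; the solar wind speeds are illustrative values I picked, not measurements, the function name is made up for this example, and it assumes the wind travels the whole way at one constant speed.

```python
# Rough travel time for the solar wind from the L1 monitoring point to Earth.
# Assumption: the wind covers the whole distance at one constant speed.

L1_DISTANCE_MILES = 932_000          # distance quoted in the text
KM_PER_MILE = 1.609344
L1_DISTANCE_KM = L1_DISTANCE_MILES * KM_PER_MILE   # roughly 1.5 million km


def l1_lag_minutes(wind_speed_km_s: float) -> float:
    """Minutes between a measurement at L1 and its arrival at Earth."""
    if wind_speed_km_s <= 0:
        raise ValueError("wind speed must be positive")
    return L1_DISTANCE_KM / wind_speed_km_s / 60


if __name__ == "__main__":
    # Illustrative speeds in km/s, slow to very fast (assumed values only).
    for speed in (400, 600, 800, 1600):
        print(f"{speed:>5} km/s -> about {l1_lag_minutes(speed):.0f} minutes")
```

With these assumed speeds, a slow 400 km/s wind takes a bit over an hour to arrive, and it takes something near 1,600 km/s to shrink the warning to around 15 minutes.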

Applying my new knowledge firsthand

This year’s Iceland Aurora photo workshop was the first opportunity Don Smith and I had to put our Bz knowledge to the test. Regardless of the Kp forecast, we always go out unless the sky is completely covered by clouds, with no hope for clearing. This year we made it out 4 nights, at 3 different locations.

Our first attempt was at Kirkjufell, but the Kp was low and the Bz stayed positive and, as expected, the aurora was never more than a faint green, invisible to our eyes and barely visible in our images. We ended up having a beautiful moonlight shoot at one of the most photogenic mountains in the world, but no real aurora display.

The next night was on Vatnsnes Peninsula in far north Iceland. Despite a similarly low Kp forecast, when we finished dinner and saw stars overhead, we went out aurora chasing again. When we started the aurora was definitely better than the prior night, but nothing spectacular. Then the Bz turned moderately negative about half-way through our shoot—it was as if someone had flipped a switch to give us a firsthand demonstration of what a negative Bz can do. The show this night was far from the most dynamic that Don and I have seen, but it was very nice—especially for the first-time aurora viewers in our group.

The next night we had clear skies again, so our guide took us to Dimmuborgir Lava Fields. With a network of trails that wind beneath striking volcanic rocks (used in Game of Thrones), this turned out to be a fantastic spot for the northern lights—sadly, despite a pretty good Kp forecast, the Bz didn’t cooperate and the aurora that night, while better than the Kirkjufell show, didn’t come close to the prior night.

Which brings me to the workshop’s final northern lights shoot. With a forecast for clear skies, a decent Kp, and a Bz that had been mostly negative for several hours, we returned to Dimmuborgir with high hopes. From the second we exited our van and saw the lights dancing overhead, I knew we were in for a treat. Since the group had already been here twice (once for that earlier aurora shoot, and then again the next afternoon), Don and I just gave everyone a be-back time and set them free (then stood back to avoid being trampled).

I took a lot of pictures of this spectacle, but had almost as much fun watching everyone’s reaction to it. All the while, I monitored the Bz on my aurora app, further confirming its correlation with the brilliance and spread of the aurora above us.

The show this night might not have been the most spectacular northern lights display I’ve ever seen, but it was definitely in my personal top 5—made even better by a location that provided the best variety of striking foreground subjects I’ve ever had for an aurora. And being able to include the Big Dipper with this scene was an unexpected treat.

Join Don and me for next year’s Circle of Iceland Northern Lights photo workshop (a brand new itinerary)


The Lights Fantastic

Where in the World is Gary?

Posted on March 23, 2026

You may (or may not) have noticed that my “weekly” blog posts have slowed somewhat in the last month or two. I haven’t gone anywhere—or more precisely, I’m still going the same places and doing the same things I always have, I’m just prioritizing my time differently. After 15 years of stressing, staying up late, missing meals, and in many other ways pushing myself too hard to meet that once-a-week blog goal, I simply decided not to let myself be ruled by arbitrary, self-imposed commitments (I’m a slow learner). I still love writing this blog and have no plans to quit—I’m just going to adjust my time management a bit to emphasize other priorities, especially when my travel schedule starts to take its toll. But anyway…

So exactly where in the world have I been in the last two months? I thought you’d never ask. At the end of January and into early February, I was in Death Valley and the Alabama Hills for my (final) Death Valley workshop; a couple of weeks later I was off to (snowy!) Yosemite for my Horsetail Fall workshop. A storm that dropped record snowfall meant no Horsetail Fall, but seeing Yosemite smothered in white was more than sufficient compensation. A week after that, I jetted off to (snowy and icy) Iceland for Don Smith’s and my annual aurora workshop. Don and I have been doing this trip for many years now, but this year we mixed things up a bit by following a more northerly itinerary. I was home from Iceland for less than 36 hours before making a 13-hour drive to Phoenix for my annual MLB Spring Training trip (go Giants!). Phoenix had record-shattering (for March) highs in the 90s—going from multiple layers of wool and down to shorts, tank tops, and flip-flops was probably the most extreme weather whiplash I’ve ever experienced. And though my Spring Training trip isn’t for photography, I did pack my camera bag because on my drive home I added a day so I could detour through Death Valley to check out the super-bloom (nice, but nothing like the one I witnessed in 2005). I made it home to Sacramento (where our highs are only in the 80s) last Wednesday night, and am looking forward to a 5-week break from the travel.

Thanks to all this recent travel, the only thing in my life accumulating faster than unprocessed images seems to be the dull but essential tasks associated with running a business. Sigh. But I had to process something, so today I’m sharing a northern lights image from the first of two beautiful aurora shows this year’s group enjoyed. The aurora forecast for this night wasn’t great, but the sky was clear (-ish), so despite the late hour, temps in the low 20s (upper teens?), and no specific location in mind, we piled into our spacious Sprinter van and went aurora hunting. Why? Because that’s what photographers do.

We were in northern Iceland’s inherently remote Vatnsnes Peninsula, but somehow found an even more remote road and just drove until we liked the view. How remote? We were out there more than two hours and didn’t see a single other car. (I’m pretty sure our guide knew where we were, but no one else had a clue.)

For the first hour or so we had enough green glow to get the aurora newbies excited, but nothing exciting enough to make this grizzled aurora veteran take his camera out. Had I been by myself I might have clicked a frame or two, but I was content to spend my time making sure everyone was ready in the event the activity ramped up. It actually worked out nicely to have a dedicated practice session to get everyone up to speed with the challenges of night photography.

Not long after we told the group we’d give it another 20 or so minutes, a rising, nearly full moon poked through clouds behind us and bathed the snow and rock in moonlight. That was nice, but a couple of minutes after the moon’s appearance, almost like magic a green shaft materialized on the northeast horizon and within seconds stretched above our heads to touch the opposite horizon—the real show was on. Soon we were all oooo-ing and ahhhh-ing, spinning around and trying to monitor the ever-changing overhead display—one minute the best show would be in the northeast, the next it would be due west. For the first-timers the priority was the best aurora, regardless of the foreground; those of us with prior aurora successes could afford to be more selective about our foregrounds. Though nothing on the ground out here was spectacular, I liked the view across the road, facing more west and northwest. Wanting to avoid including any road in my frame, I walked to the other side and framed up a small moonlit mountain.

My go-to night photography lens is my 14mm f/1.8—so imagine my surprise after arriving in Iceland to discover that its slot in my camera bag was empty—oh yeah, I took it out right before my Yosemite workshop because we wouldn’t be doing any night photography, then never thought about it again. Oops. If this had been a Milky Way shoot, where every photon counts, I’d have been pretty bummed (understatement). But for a good aurora display, especially one above a moonlight-augmented landscape, f/2.8 is plenty fast. And even though I don’t use my 12-24 f/2.8 a lot, when I do need it I really need it (especially in Yosemite), so it’s a fulltime resident of my camera bag. Which is how I ended up shooting this entire scene at 12mm and f/2.8. It didn’t take long to realize that I appreciated being able to include a little more sky much more than I missed those 1.3 stops of light.
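If you want to check that 1.3-stop figure yourself, the math is simple: light gathered scales with one over the f-number squared, and each stop is a doubling. A quick Python sketch follows (the function names are mine, purely for illustration):

```python
import math


def stops_between(faster_f: float, slower_f: float) -> float:
    """Stops of light lost going from faster_f to slower_f (e.g. 1.8 -> 2.8)."""
    # Light gathered scales with 1 / f_number**2, and one stop is a factor of 2,
    # so the difference in stops is log2((slower / faster) ** 2).
    return 2 * math.log2(slower_f / faster_f)


def light_ratio(faster_f: float, slower_f: float) -> float:
    """How many times more light the faster aperture gathers."""
    return (slower_f / faster_f) ** 2


if __name__ == "__main__":
    print(f"f/1.8 vs f/2.8: {stops_between(1.8, 2.8):.1f} stops")
    print(f"light ratio: {light_ratio(1.8, 2.8):.1f}x")
```

Going from f/1.8 to f/2.8 works out to roughly 1.3 stops, or about 2.4 times less light, which at the same ISO means a shutter speed about 2.4 times longer for the same exposure.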

This aurora show was memorable less for its spectacular nature—it was very nice, but didn’t compare to many other northern lights shows Don and I have shared with prior workshop groups—than it was for the fact that it enabled Don and me to breathe a collective sigh of relief, knowing that everyone in our group got to see and photograph the prime reason they signed up for an Iceland winter photo workshop: a legitimate northern lights display.

The next day we traveled to another region farther east, trying for the aurora again that night at a location with a much better foreground. That night we saw a little bit of green, but by then everyone had seen firsthand that it could be much better. What Don and I hadn’t told them after our first aurora success was how much better it could be. But before we were done, they learned that for themselves. But that’s a story for a different blog post…


Celestial Wonders


Category: aurora, Iceland, Moonlight, northern lights, Photography, snow, Sony 12-24 f/2.8 GM, Sony Alpha 1, Vatnsnes Peninsula, winter Tagged: aurora, Iceland, moonlight, nature photography, northern lights, Vatnsnes Peninsula

Too Much of a Good Thing

Posted on March 10, 2026

Greetings from Iceland! And no, despite appearances to the contrary, this image is not Iceland (or even Snowland), it’s Yosemite. (Actually, if you know Iceland, the “not Iceland” giveaway would be all the trees.)

People ask me all the time, what’s the best season to be in Yosemite? While I honestly can’t pick a “best” Yosemite season, I can say that each season in Yosemite offers its own set of good things that distinguish it from the other seasons. Even my least favorite season—yes, I can give you a least favorite Yosemite season—has many good things that I feel fortunate to have witnessed.

My least favorite is easy: summer. Summer is when the crowds swarm every square inch of Yosemite Valley, the waterfalls and meadows dry up, and the sky is chronically blank. But summer is also the only time Yosemite’s high country—Tuolumne Meadows, Olmsted Point, Glacier Point, Sentinel Dome, Taft Point, and the breathtaking High Sierra backcountry—is easily accessible.

While spring is when the tourists start returning to the park after their winter hiatus, it has enough booming waterfalls, fresh green meadows, reflective vernal pools, and ubiquitous dogwood blooms to make the increasing crowds (more than) tolerable. Spring is the Yosemite of postcards and calendar pictures, and probably the best season for first-timers.

In autumn, the now depleted snowpack has completely dried, or at least slowed to a trickle, Yosemite’s heralded waterfalls. But that diminished flow means the low and slow Merced River splits the length of Yosemite Valley like a twisting, reflective ribbon. Adding to these reflections a surprising abundance and variety of fall reds and golds elevates autumn to my personal favorite Yosemite season for creative photography.

That brings me to Yosemite’s most variable of seasons: winter. Come to Yosemite during a dry winter and you’ll find lots of dirt, bare deciduous trees, dry meadows, and unimpressive to nonexistent waterfalls. On the other hand, with the exception of the last couple of weeks in February, Yosemite in winter is refreshingly serene—and even late February’s Horsetail Fall mayhem doesn’t compare to the summer swarms. And even at its worst, winter reflections are quite nice, and it’s still Yosemite (El Capitan, Half Dome, et al haven’t gone anywhere), so I’ll take even the driest Yosemite winter without people over the nicest summer day.

But a Yosemite winter at its best is a sight to behold. Winter is Yosemite’s wet season, making it the best season for capturing a clearing storm. Most of the precipitation in Yosemite Valley falls as rain, but if you’re fortunate enough for your Yosemite visit to coincide with a cold storm that smothers Yosemite Valley in white, you’ll see it at its hands-down most beautiful. And while you may find yourself sharing this beauty with other ecstatic photographers, even the slightest threat of inclement weather seems to repel virtually all tourists.

Falling snow does introduce a host of difficulties that include: limited to impossible access to certain locations, treacherous driving, the potential for chain requirements (usually limited to vehicles without 4WD/AWD), and clouds temporarily shrouding Yosemite’s soaring monoliths and waterfalls. Not to mention the difficulties inherent to photographing in snowy conditions. But if you can overcome these hardships, the payoff is worth it.

The thing is, to witness Yosemite’s fresh-snow majesty, you need to be present among the falling flakes, no matter how cold the temperature or poor the photography. That’s because swirling clouds of a clearing storm vanish so quickly, and the trees start shedding their white coats almost the instant the sun comes out—if you wait until you hear it snowed in Yosemite Valley before rushing to the park, you’re too late. In fact, even if you’re actually present in the park and simply retreat to the shelter of your hotel room or a valley restaurant until the clearing starts, you risk missing some or all of the best stuff.

Living less than four hours from Yosemite Valley, monitoring the Yosemite forecast gives me enough advance notice to get to the park while the snow is still falling. In other words, it’s not by accident that my galleries are filled with so many Yosemite snow images.

But sometimes I just get lucky. Scheduling workshops a year or more in advance means no clue what the conditions will be—the best I can do is try to maximize the chances for something. Horsetail Fall happens in mid to late February, but all the tumblers clicking into place is never guaranteed. Similarly, while I know February is one of the most likely months for snow in Yosemite Valley, no snow is always more likely—but that doesn’t keep me from wishing. (As much as I hope for ideal Horsetail Fall conditions for my workshop—lots of water in the fall and unobstructed sunlight at sunset—I’ll take snow any day.)

This year’s Yosemite Horsetail Fall workshop, which wrapped up just a week before I departed for Iceland, fulfilled those snow dreams many times over. How much snow did we get? Look at the picture above, and consider that it came on our first day, at our second photo location, and that at least two more feet above what you see here fell before the workshop finished.

The compactness of Yosemite Valley, combined with a lifetime of Yosemite visits, enables me to adjust my plans on the fly in rapidly changing conditions. On that first afternoon, with a moderate snow falling in the valley I expected poor visibility, so my original plan was to start at Bridalveil Creek, where we could photograph nearby scenes. But when I saw that Bridalveil Fall and El Capitan were still visible despite the falling snow, I headed straight to Valley View.

We enjoyed about 30 minutes of quality photography there before the ceiling dropped and erased everything more than a few hundred yards away. I quickly collected the troops and we beelined up Big Oak Flat Road to Upper Cascade Fall, which I was confident would provide the best combination of a photogenic scene and a subject close enough to still be visible.

I was actually a little surprised to find the top segment of this multitiered waterfall (upper left corner of the image) slightly obscured by the falling snow—fortunately it was visible enough to still be worth photographing. Our biggest challenge turned out to be a strong breeze blowing snow straight down the mountainside and directly onto the front element of any lens trained on the scene.

Normally I shield my camera with an umbrella in rain and snow, but the wind made using an umbrella problematic, so I switched to Plan B and pulled out the large microfiber cloth that lives in my camera bag. While composing, metering, and focusing, I just ignored the snowflakes accumulating on the front of my lens. When everything was ready, I wiped the lens clean, then draped the cloth over it while waiting for my 2-second timer to count down (have I mentioned lately how much I hate Sony’s cable and Bluetooth remotes?), whipping the cloth away at the latest possible instant before the shutter clicked. I continued this way through a series of compositions, until I was confident I’d captured something worthy of processing.

Turns out, this was just the first of many spectacular shoots my group enjoyed. As the workshop continued and we handled every single discomfort and inconvenience the storms served up, all while watching the photography just keep getting better and better, I became more and more convinced that there was no such thing as too much snow in Yosemite, and just kept hoping for more. And more, and more, and more…

On the afternoon before our final day, just as I started believing nothing could go wrong, the National Park Service said, “That’s enough,” and closed the park. I was stunned, and for some reason recalled the time my college baseball team, while on a roadtrip to a distant city, was gorging at an “all you can eat” buffet—until the manager came out and informed us, “That’s all you can eat.”

Even though we were shut out of the park for the workshop’s final day, we still gathered that day for one last image review. Based on the images shared, and the excitement everyone had with all of their captures, no one was too disappointed. It was almost as if we all felt that, given what we’d seen so far, expecting more might just be a little greedy.

I couldn’t agree more. And honestly, despite missing a day and not having access to every location, I have to say this turned out to be one of the most photographically successful workshops in my 20 years leading photo workshops.


Yosemite Snow


Category: Cascade Creek, snow, Sony 24-105 f/4 G, Sony a7R V, waterfall, winter, Yosemite Tagged: Cascade Creek, nature photography, snow, waterfall, Yosemite

In the Pink

Posted on February 14, 2026

Twilight Wedge and Setting Moon, Mt. Whitney and the Alabama Hills, Eastern Sierra

Sony a7R V

Sony 24-105 G

1/5 second

F/16

ISO 100

The rewards of rising before the sun are many. For me, the opportunity to witness twilight’s soft, cool light slowly warmed by the approaching sun, to breathe in the cleanest air of the day, and to simply be alone with the purest sounds and smells of nature, are ample compensation for whatever chill and sleep deprivation I might experience. And on mornings when the sky is cloudless and the air especially pure, there might just be a bonus.

To collect that bonus, about 20 minutes before sunrise, look for parallel bands of vivid pink and steely blue hugging the horizon opposite the rising sun. Many photographers, myself included, call this early display the “twilight wedge,” but it has other similarly non-scientific names. Closer to the horizon, the dark blue band is actually Earth’s shadow—the final section of sky not receiving any direct light from the soon to appear sun; just above, the pink band is the day’s first rays of direct sunlight. It’s pink because the sun is just far enough below the eastern horizon that only its longest, red wavelengths manage to battle through the atmosphere all the way to the other side of the sky. The cleaner the air, the more vivid the twilight wedge’s color. (This color is also possible after sunset, but by day’s end there are usually more color-robbing particles in the air, and Nature’s quiet is often disturbed so much by human activity that some of the magic is lost.) And when towering peaks soar far enough above the viewer’s vantage point that they jut into this pink twilight wedge band, we call it alpenglow.

I love finding beautiful scenes to go with the gorgeous pre-sunrise sky opposite the sun. Near the top of my list is the Alabama Hills, in the shadow of the highest Sierra Nevada peaks. The Alabama Hills are a disorganized jumble of massive, weathered boulders covering many square miles, making ideal foregrounds for the assortment of serrated peaks looming to the west. A more perfect arrangement for nature photographers couldn’t be assembled—and, as if that’s not enough, the Alabama Hills are also among the best places on Earth to photograph alpenglow.

Of course all this is no secret, and the Alabama Hills has become one of the most popular photography spots in California. To improve my chances of capturing unique images here, I love adding the moon to my Alabama Hills / Sierra Crest scenes. (And when I say “add,” I mean the honorable, old-fashioned way, not with AI or other digital shenanigans.) For years, I’ve timed my Death Valley winter workshop to allow me to take my group to the Alabama Hills, just a 90-minute drive from Death Valley, for the workshop’s final sunset, followed by the moon setting behind the Sierra Crest at sunrise the final morning.

Of course, because there’s only one “best” day in each lunar cycle to photograph a full (-ish) moon setting behind the Sierra Crest, I have no wiggle room when scheduling this workshop—a day early, and the moon is gone before the landscape is light enough to photograph; a day late, and the sky is much too bright by the time the moon drops close to the mountains.

But even nailing the day doesn’t ensure success. Clouds are of course a concern, especially in winter. And each year the timing and position of the moon’s disappearance behind the crest is a little different. In January and early February (when I always schedule this workshop), viewed from my preferred location, the moon sets somewhere between Mt. Whitney and Mt. Williamson (California’s two highest peaks), usually closer to Williamson.

I like to get my groups out to the Alabama Hills about 30 minutes before we can start photographing the moon. The foreground will still be quite dark to our eyes, and the moon will be at least 10 degrees above the crest, but I tell my students to start shooting as soon as we arrive.

Using a long exposure in a frame that doesn’t include the moon (the top of the frame is below the moon) captures much more (mostly shadowless) foreground detail than our eyes see, while juxtaposing the mountains’ light gray granite against a sky that’s darker than the peaks. It’s a magnificently striking sight indeed, especially when there’s snow on the peaks. We’ll keep shooting versions of this until it’s time to include the moon in the festivities.

For any moon photography, the darker the sky, the better the moon stands out. But too early and it’s impossible to capture foreground detail without completely blowing out the moon. For me, the time to photograph any setting full moon starts about 20 minutes before sunrise: basically, as soon as the landscape has brightened enough to capture foreground and lunar detail with one click. And if we’re especially lucky, when that time arrives, we’ll find the moon in the midst of a vivid pink twilight wedge.

This opportunity only lasts a few minutes, because as the sky brightens and the foreground exposure gets easier, the color fades and the essential contrast between the sky and moon decreases. The twilight wedge lasts less than 10 minutes, and is followed by a slowly warming sky. By maybe five minutes after sunrise, the good color is long gone and the moon/sky contrast has decreased enough for me to put my camera away.

This year’s Alabama Hills moonset was especially nice. One of my favorite things about my Death Valley / Alabama Hills workshop is that we get two “ideal” (moon setting as the color and light are at their best) sunrise moonsets for the price of one. On our last day in Death Valley, from Zabriskie Point we photograph the moon setting behind Manly Beacon, one of Death Valley’s most striking and recognizable features. Because the mountains behind which the moon sets from Zabriskie rise only about 3 degrees above a flat horizon, this moonset happens much closer to the “official” (flat horizon) sunrise that’s universally used for any celestial rise and set. The next morning we’re in the Alabama Hills—even though the moon sets about an hour later than it did the day before, because the Sierra Crest towers about 10 degrees above the horizon when viewed from the Alabama Hills, the actual moonset we see happens at just about the same time as the prior day’s moonset.
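As a rough check on that timing, here is a back-of-the-envelope sketch of how much earlier a raised horizon hides the moon. Everything specific in it is an assumption made for illustration, not a figure from the workshop: a latitude of about 36.5° N for both locations, a moon setting in the west-northwest (azimuth 295° is a placeholder), a lunar drift of roughly 14.5° per hour across the sky, no atmospheric refraction, and a made-up function name.

```python
import math

# Assumed: sky rotation (~15 deg/hr) minus the moon's own eastward drift.
MOON_DEG_PER_HOUR = 14.5


def minutes_early(horizon_deg, latitude_deg=36.5, azimuth_deg=295.0):
    """Approximate minutes between the moon reaching `horizon_deg` of altitude
    and its flat-horizon moonset.

    The rate of altitude change is scaled by cos(latitude) * |sin(azimuth)|
    and treated as constant over the final few degrees of descent.
    """
    drop_rate = (
        MOON_DEG_PER_HOUR
        * math.cos(math.radians(latitude_deg))
        * abs(math.sin(math.radians(azimuth_deg)))
    )  # degrees of altitude lost per hour
    return 60.0 * horizon_deg / drop_rate


zabriskie = minutes_early(3)   # ridge about 3 degrees above a flat horizon
alabama = minutes_early(10)    # Sierra Crest about 10 degrees above a flat horizon

print(f"Zabriskie Point: moon gone ~{zabriskie:.0f} min before flat-horizon moonset")
print(f"Alabama Hills:   moon gone ~{alabama:.0f} min before flat-horizon moonset")
print(f"Difference:      ~{alabama - zabriskie:.0f} min")
```

Under these assumptions the Sierra Crest hides the moon roughly 40 minutes earlier than the Zabriskie ridge does, which offsets much of the day-to-day delay in moonset. The exact number shifts with the moon’s actual azimuth on the day.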

Which is exactly how things unfolded for this year’s workshop group. We followed up a beautiful Death Valley Zabriskie Point sunrise moonset with a similarly outstanding Alabama Hills sunrise moonset the next morning. The vivid hues of the twilight wedge had just peaked when I clicked this image. To my eyes, this entire scene (except the moon), and especially the foreground, was much darker than this image shows. But my camera’s ridiculous dynamic range, combined with Lightroom’s masking that allows me to process the sky and foreground independently from each other, enabled me to expose the scene dark enough to capture essential lunar detail, yet remain confident I had enough recoverable detail in the foreground.

Workshop Schedule || Purchase Prints || Instagram

Category: Alabama Hills, Eastern Sierra, full moon, Moon, Mt. Whitney, Photography, Sony 24-105 f/4 G, Sony a7R V Tagged: Alabama Hills, Eastern Sierra, full moon, moon, moonset, Mt. Whitney, nature photography

A Photographer’s Vision

Posted on February 9, 2026

I just returned from a spectacular workshop in Death Valley, one of the most fascinatingly unique locations on Earth. After missing Death Valley last year, it was especially nice to return. (Of course it didn’t hurt that I had a great group that enjoyed fantastic conditions from beginning to end.)

I first got to know Death Valley as a kid, when my family camped there several times over the Christmas school break. We’d spend most of the week between Christmas and New Year’s Day exploring all kinds of cool stuff that would thrill any young boy: Scotty’s Castle, Rhyolite (a ghost town), and collections of abandoned mining equipment scattered about the desert. We also went to all the standard vistas like Zabriskie Point and Dante’s View, and hiked some of the shorter, most popular trails (Golden Canyon, Mosaic Canyon, Natural Bridge). But with all the cool old stuff, I was much less interested in the scenery and hiking part of those trips, and never really registered Death Valley’s spectacular natural beauty.

About 20 years ago I returned with a camera and saw Death Valley in a completely different way. Suddenly, beauty was everywhere. It would have been easy to—and I probably did—think to myself some version of, “Gee, I don’t remember Death Valley being this beautiful.”

When traveling more with my camera to other childhood family vacation destinations kept eliciting similar epiphanies, I started noticing the way photography was enhancing my overall view of the world. Suddenly, I was seeing the world as a photographer and finding beauty everywhere.

Today, camera or not, my eyes naturally scan my surroundings for scenes, large and small, that resonate personally. Even without a camera, I now seem to unconsciously create compositions in my brain, mentally identifying striking features and their relationships to one another, and figuring out the best way to position myself and frame the scene.

This photographer’s vision isn’t limited to a scene’s physical objects; it also extends to weather and light, both current and potential. What conditions will complement this scene best, and how do I get here to enjoy them? Warm early/late light, moonrise or moonset, fall color, overcast, the Milky Way, a reflection, sunstar: anything that might elevate the scene.

I don’t think this makes me especially unique—in fact I’d venture to guess that many (most?) serious nature photographers view the natural world similarly. And for those who don’t, I believe it’s a quality that can be cultivated with a little conscious practice until it comes naturally.

A great example of putting this mindset to use came the day before this year’s Death Valley workshop, while checking out the conditions at Hell’s Gate on Daylight Pass Road. At the end of an 8-hour drive that started at 7:00 a.m. (to ensure I could get here before dark), I pulled up to Hell’s Gate about 15 minutes before sunset.

I’ve been taking my groups here on my workshop’s first night for many years, but despite that familiarity, there are a few variables I always like to check out for their current status. And with heavy rain earlier this winter washing out many Death Valley roads and locations, I was especially keen to make sure there would be no surprises here.

What I like about Hell’s Gate is that it’s not a commonly shot view, and it has a variety of photography options in multiple directions. Directly across the road from the Hell’s Gate parking area is a small mound dotted with photogenic rocks and shrubs that all make nice foregrounds for the long view down the valley toward Telescope Peak and beyond, and west toward pyramid-shaped Death Valley Buttes. There’s even a mini-canyon (7-foot vertical walls and no more than 30 feet long) that can be used to frame the view of the Funeral Mountains to the east and south.

Uphill from this little canyon is a short (100 yards or so) but steep (-ish) trail to an elevated prominence with a similar view. Foreground options up here include more striking rocks, plus an assortment of very photogenic cacti. My favorites are the many clumps of barrel cactus sprinkled around the surrounding slopes. Depending on the year, the condition of the barrel cacti can range from fresh pink with small flowers, to a dried out brown-gray. Though there were no flowers this year, I was happy to see that they were all beautifully pink and alive.

Walking up the trail on this visit, my eyes picked out the best cacti and I started making mental pictures without really realizing it. A little later, visualizing a potential sunstar, I took note of exactly when and where the sun would drop behind the nearby buttes and distant Cottonwood Mountains.

Satisfied all was well, I hopped in my car and, instead of making the 30-minute drive to my hotel in Furnace Creek, I added 2 hours to my already long day by detouring to Pahrump so I could purchase essential grocery items I’d foolishly left at home. (This is actually an improvement over my prior Death Valley workshop, when I forgot to bring my computer. And in my defense, that’s the only time in my 20 years of leading workshops I’ve done that, and I now triple-check to ensure it never happens again.)

But anyway… When I returned to Hell’s Gate with my group the following evening, I was able to point out all the possibilities and describe exactly what the light would do as the sun dropped. I encouraged everyone to identify the views they liked best, as well as foregrounds to put with them, so they wouldn’t be scrambling around looking for shots when the light was at its best. (I’ve noticed that this kind of anticipation doesn’t happen naturally for some people at the start of a workshop, so it’s become a particular point of emphasis.)

On the first shoot of any workshop I try to get around to everyone and therefore rarely shoot, but as the sun dropped and I saw that everyone was quite content, I returned to a composition that I’d identified the prior evening.

Earlier I’d pointed out to my group the very large barrel cactus clump perched on the hillside about 20 feet above the trail, but I think the steep slope covered with loose rock, not to mention lots of easier access compositions nearby, had discouraged them from scaling the hill. So up I went. Reaching my target cactus, I checked out the even larger barrel cactus clump farther up the hill and maybe 20 feet away.

My vision on the first visit was to frame Death Valley Buttes and the sunstar (if the clouds permitted it) with these two cacti; once I was actually in position in front of the closest barrel cactus, I was pleased to confirm that what I’d visualized would in fact work. I just had to tweak my composition to account for the rocks at my feet and clouds near the horizon. The other thing I had to be careful about was my camera bag, which could very easily tumble down the hillside if I didn’t plant it firmly braced and balanced on the rocks.

To deemphasize the (ugly) brown foreground, I dropped my tripod to about a foot above the ground, which made the foreground all about the beautiful cactus and interesting rocks. And though scenes rarely fully cooperate with my goal for clean borders, I took special care to find the best place to cut the rocks at the bottom and sides of the frame, and the clouds at the top.

When I was satisfied with my composition, I picked my focus point—with the closest rocks about 18 inches away, it helped that I already needed to stop way down for the sunstar. Since I wanted everything in this frame sharp, I applied my tried-and-true seat-of-the-pants focus point technique: pick the closest thing that must be sharp (the rocks), then focus a little bit behind it—because focusing on the closest thing gives me sharpness in front I don’t need. (“A little bit” varies with the scene, focal length, f-stop, and subject distance, but the more you do this, the better you get at deciding what “a little bit” is.) I chose f/20 and focused on the close cactus, about 2 feet away.
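For anyone who wants to sanity-check a seat-of-the-pants focus choice like this one, the standard depth-of-field formulas give a quick answer. The sketch below is only an illustration under loud assumptions: the focal length isn’t stated above, so 16mm (the wide end of a 16-35 lens) is a guess, the 0.03mm circle of confusion is a common full-frame convention, and the function name is hypothetical. It also ignores diffraction softening at small apertures.

```python
MM_PER_INCH = 25.4


def dof_limits_mm(focal_mm, f_number, focus_mm, coc_mm=0.03):
    """Return (near, far) limits of acceptable sharpness in millimeters.

    Uses the textbook hyperfocal-distance formulas; `far` is infinity when
    the focus distance is at or beyond the hyperfocal distance.
    """
    hyperfocal = focal_mm ** 2 / (f_number * coc_mm) + focal_mm
    near = focus_mm * (hyperfocal - focal_mm) / (hyperfocal + focus_mm - 2 * focal_mm)
    if focus_mm >= hyperfocal:
        far = float("inf")
    else:
        far = focus_mm * (hyperfocal - focal_mm) / (hyperfocal - focus_mm)
    return near, far


# Assumed 16mm focal length; f/20 and a 2-foot focus distance come from the text.
near, far = dof_limits_mm(focal_mm=16, f_number=20, focus_mm=24 * MM_PER_INCH)
print(f"Sharp from about {near / MM_PER_INCH:.1f} inches to {far}")
```

With those assumed inputs, the near limit lands well inside the 18-inch rocks and the far limit reaches infinity, which is consistent with focusing a little behind the closest subject. A longer focal length would narrow that margin quickly.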

When the sun reached the horizon, I started with a shutter speed that balanced the black shadows and white highlights as much as possible (knowing I’d be able to recover some of each in processing), and started clicking. After each click, I adjusted my exposure in 2/3-stop increments: first up to about 3 stops above my starting point, then back down to 3 stops below, continuing until the sun disappeared. This gave me a broad range of exposures to choose between on my computer later.
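To make the bracketing pattern concrete, here is a minimal sketch of one up-and-down pass in 2/3-stop steps. The 1/30-second starting shutter speed is a made-up placeholder, the function names are hypothetical, and because 2/3-stop steps don’t land exactly on 3 stops, the sketch stops at the last step inside that limit (2 2/3 stops).

```python
from fractions import Fraction


def bracket_offsets(step=Fraction(2, 3), limit=3):
    """Stop offsets for one pass: climb from 0 toward +limit, then step back
    down through 0 toward -limit, never exceeding the limit."""
    n = int(limit / step)  # whole steps that fit inside the limit
    up = [i * step for i in range(n + 1)]
    down = [i * step for i in range(n - 1, -n - 1, -1)]
    return up + down


def shutter_seconds(base_seconds, offset_stops):
    """Each added stop doubles the exposure time; each removed stop halves it."""
    return base_seconds * 2 ** float(offset_stops)


base = 1 / 30  # placeholder starting shutter speed, not from the shoot
for offset in bracket_offsets():
    print(f"{float(offset):+.2f} stops -> {shutter_seconds(base, offset):.4f} s")
```

Aperture and ISO stay fixed in this sketch, so only the shutter speed changes from frame to frame.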

When we were finished, everyone seemed pretty happy with our start. Though I didn’t get a chance to process my own images until after the workshop, from what I saw in the image review, I’d say their excitement was justified.

Category: Death Valley, Hell's Gate, Sony 16-35 f2.8 GM II, Sony Alpha 1, sunstar Tagged: Death Valley, Hell's Gate, nature photography, sunstar

Happy Anniversaries to Me

Posted on January 26, 2026

Rural Lightning Strike, Southeastern Wyoming

Sony a7R V

Sony 24-105 G

.6 seconds

f/8

ISO 800

I just realized that January 2026 marks a couple of milestones for me. Twenty years ago this month, I left my “real” job at Intel (good company, lousy manager) to pursue my dream of becoming a landscape photographer. And 15 years ago this month, I started writing this blog.

Leaving Intel was a leap of faith that, I now know, was far riskier than I believed at the time. That it worked out I attribute more to fortuitous timing than some kind of genius master plan. By the time I left Intel, I’d accumulated a pretty good portfolio of images that I’d printed and sold in weekend art shows. I also had prints in a few local galleries, but print sales alone didn’t generate anywhere near enough money to justify leaving a good job (or for that matter, even leaving a bad job).

My first post-Intel step was to ramp up my art show schedule and upgrade my art show booth lighting and display panels; despite decent art show success ($1000-$4000/weekend), doing the math told me that the time, effort, and relentless (intrastate) travel necessary to earn a full-time income on the art show circuit would soon suck the joy from photography, and leave precious little time for actual photography. So I concentrated on a handful of quality shows within a 100-mile radius of my Sacramento home, and started looking for other ways to support myself with landscape photography.